Meta has been ordered to pay a landmark fine of £280 million following a comprehensive investigation into the impact of its platforms on the mental health of younger users. The penalty, issued by regulatory authorities, marks one of the most significant financial sanctions against a social media conglomerate to date.

The ruling follows years of intense scrutiny regarding how Instagram and Facebook manage the safety of minors. It signals a decisive shift in how international regulators approach corporate accountability within the digital sphere.

The fine was confirmed after an exhaustive three-year inquiry that examined millions of internal documents and thousands of user complaints. Investigators concluded that Meta, the parent company of Instagram and Facebook, failed to implement sufficient safeguards to protect children from harmful content and addictive design features.

The financial penalty is accompanied by a series of mandatory changes to Meta’s operating procedures in the United Kingdom and Europe. These changes are designed to prioritise the psychological well-being of users under the age of 18 over commercial engagement metrics.

Mark Zuckerberg’s tech giant has faced mounting pressure from parents, educators, and mental health professionals who argue that the company has prioritised profit over the safety of its most vulnerable users. This fine represents the culmination of those concerns, moving the conversation from public debate into the realm of legal consequence.

The Regulatory Landmark and Financial Penalties

The £280 million fine was levied after regulators found Meta in breach of several key provisions within the Online Safety Act. This legislation, which has seen several iterations in recent years, empowers authorities to hold tech companies to a higher standard of care.

The investigation focused on the period between 2023 and 2025, during which Meta was alleged to have ignored warnings regarding its recommendation algorithms. These algorithms were found to be actively promoting content related to self-harm and eating disorders to accounts registered to minors.

In its final report, the regulatory body stated that Meta’s actions were not merely negligent but represented a systemic failure to address known risks. The fine is calculated based on a percentage of the company’s global turnover, highlighting the severity of the infractions found during the audit.

The ruling has sent shockwaves through the technology sector, with investors and rival platforms closely monitoring the fallout. For years, social media companies have operated under a model of self-regulation, but this fine suggests that the era of "move fast and break things" has met its legal limit.

Legal experts suggest that the £280 million figure is intended to serve as a deterrent. While Meta’s annual revenue is vast, a fine of this magnitude impacts the bottom line and necessitates a response to shareholders who are increasingly wary of regulatory risks.

Meta has indicated that it may appeal the decision, arguing that it has invested billions in safety and security features. However, the regulatory body remains firm, citing a "persisting gap" between the company’s public marketing and its internal engineering priorities.

The fine also includes a directive for Meta to undergo independent third-party audits every six months. These audits will ensure that the company is meeting its new obligations to monitor and remove harmful content targeted at children within specified timeframes.

Internal Findings and the Psychological Cost

At the heart of the case were internal documents leaked by whistleblowers and obtained through legal discovery. These documents revealed that Meta’s own researchers had flagged the negative impact of Instagram on the body image of teenage girls as early as 2021.

Despite these warnings, the company continued to refine features that encouraged "doom-scrolling" and social comparison. The investigation found that the "Likes" system and the "Discover" feed were specifically tuned to maximise time spent on the app, often at the expense of the user’s mental clarity.

Psychiatrists who testified during the inquiry provided evidence of a direct correlation between high social media usage and increased rates of anxiety and depression among UK school children. They argued that the "dopamine loops" created by these platforms are particularly damaging to the developing adolescent brain.

The report highlights several instances where the algorithm failed to distinguish between a user looking for support and a user being drawn into a spiral of negative content. In some cases, the system continued to serve harmful imagery to users even after they had attempted to report it.

Corporate accountability has become the central theme of this controversy. Critics argue that Meta’s business model is fundamentally at odds with child safety, as its revenue is directly tied to the amount of time users spend interacting with ads and content.

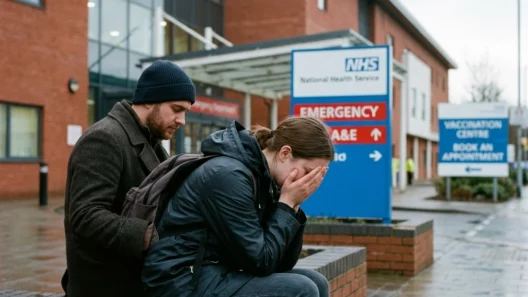

The impact on young users is described in the report as "profound and lasting." Testimonies from families who lost children to suicide linked to social media content were a pivotal part of the evidence presented. These accounts forced the regulators to move beyond technical violations and consider the human cost of algorithmic failure.

Meta has since announced a "new suite of supervision tools" for parents, but regulators argue these measures are too little, too late. The ruling demands that the burden of safety be placed on the platform’s engineers, rather than on the parents who are often outmatched by sophisticated software.

Industry Accountability and the Legislative Shift

The £280 million fine is likely to be the first of many as governments worldwide tighten their grip on the "Wild West" of the internet. This case sets a legal precedent that digital platforms can be held financially responsible for the psychiatric outcomes of their users.

Other tech giants, including TikTok and Snap, are reportedly reviewing their own safety protocols in light of the Meta ruling. The industry is facing a turning point where safety features are no longer an optional add-on but a core requirement for operating in major markets.

The shift toward state intervention reflects a broader societal demand for protection in the digital age. Just as the automotive industry was forced to implement seatbelts and airbags, the social media industry is now being forced to implement psychological safeguards.

The legislative landscape is changing rapidly. Future updates to the Online Safety Act are expected to include even harsher penalties, potentially including criminal liability for senior executives if they are found to have knowingly ignored safety risks that lead to physical or mental harm.

Meta’s response to the fine will be a litmus test for the company’s future. If it chooses to fight the ruling in a protracted legal battle, it risks further alienating a public that is increasingly sceptical of Big Tech’s motives. If it accepts the fine and implements the changes, it could signal a new era of "ethical tech."

For now, the £280 million penalty stands as a stark reminder of the responsibilities that come with managing global communication networks. The focus remains on whether these financial measures will translate into a safer digital environment for the next generation of users.

The conversation regarding social media and mental health is far from over. As technology evolves, with the integration of AI and virtual reality, the challenges of protecting young minds will only become more complex. This fine is merely the opening chapter in a long-term effort to redefine the relationship between children and the screens they carry in their pockets.

The ruling takes effect immediately, with Meta required to submit its first independent audit report by the end of the next quarter. The eyes of the world are now on the Menlo Park headquarters to see if the social media giant will truly change its ways.