Imagine scrolling through your social media feed on a quiet Tuesday morning. You see a familiar face: a journalist you’ve watched for years, someone whose career is built on integrity and factual reporting. They are looking directly into the camera, speaking with their usual cadence, but the message is jarring. Instead of breaking down the latest tech trends or explaining a complex piece of legislation, they are enthusiastically promoting a "guaranteed" gambling loophole or a cryptocurrency scam.

This isn't a hypothetical scenario or a scene from a sci-fi thriller. It is the reality of the digital landscape in 2026. As AI technology has advanced, the tools used to create deepfakes have become incredibly sophisticated, accessible and, unfortunately, weaponised. When a journalist’s face is stolen, it isn’t just a case of identity theft; it is a direct attack on the idea of truth. For independent news outlets in the UK, where we work to bring readers untold stories with honesty and clarity, this trend is especially unsettling.

The technology behind these scams has evolved from clunky, uncanny-valley animations to hyper-realistic video and audio clones. Scammers are no longer just sending poorly worded emails; they are hijacking the very faces and voices people trust to make sense of the world. By using the likeness of a respected reporter, criminals gain an immediate and undeserved sense of credibility, making their schemes more convincing and more dangerous.

The Mechanics of a Digital Heist

To understand the scale of the problem, it helps to look at how these digital puppets are made. Just a few years ago, creating a convincing deepfake required a huge dataset of images, high-end computing power and serious technical expertise. Today, the barrier to entry has fallen sharply. With just a few minutes of high-quality video, often pulled from public broadcasts, generative AI models can map a person’s facial expressions, mouth movements and even their distinctive vocal habits.

The process often starts with a source video. Scammers take a clip of a journalist reporting on a neutral topic. They then use AI software to swap the audio and manipulate the mouth movements to match a new, fraudulent script. Advanced voice cloning can copy tone, pitch and accent with alarming accuracy. That means a scammer can make a journalist appear to say almost anything, from backing a suspicious betting app to pushing false claims about a public figure.

What makes this especially hard to tackle is speed. A scammer can produce dozens of variations of a deepfake video in a single afternoon, tailoring them to different audiences or platforms. They use bots to spread the clips quickly, often targeting people who may be more vulnerable to promises of easy money. By the time the journalist or their organisation spots the video and reports it, the damage may already be done.

For those committed to independent news in the UK, this technology is a real double-edged sword. AI offers useful tools for analysis and production, but its misuse creates a climate where the public starts to question everything they see. The untold stories that matter risk being buried under synthetic noise, which makes honest reporting even more important.

The Impact on Trust and Truth

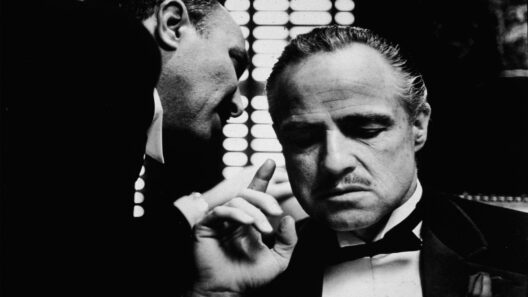

The theft of a journalist’s likeness goes far deeper than a simple financial scam. It strikes at the heart of the relationship between the media and the public. Journalism relies on a foundation of trust. When you see a reporter on your screen, there is an implicit understanding that they have verified their facts, adhered to ethical guidelines, and are presenting information in good faith. Deepfakes shatter this contract.

When a viewer is tricked by a deepfake, the immediate reaction is often anger and a sense of betrayal. Even after the video is debunked, doubt can linger. People start to wonder: "If that video was fake, how do I know the next one isn't?" This leads to a phenomenon known as the "liar’s dividend". In simple terms, the existence of deepfakes makes it easier for people to dismiss real evidence as fake. If anything can be fabricated, then truth itself becomes easier to challenge.

This erosion of trust is exactly what scammers and bad actors want. It creates a chaotic information environment where it is easier to manipulate public opinion and exploit individuals. For journalists, having your face used to promote a gambling scam is a professional nightmare. It can tarnish a hard-earned reputation in minutes. It also places a heavy burden on news organisations to constantly monitor for infringements and defend their staff against digital impersonation.

The psychological toll on the victims shouldn't be underestimated either. Seeing a "digital twin" of yourself saying things you would never say is a deeply violating experience. It feels like a loss of agency, as if your identity has been hijacked and put to work for someone else's profit. In the pursuit of bringing untold stories to light, journalists often put themselves in the public eye, but they never signed up to be the unwilling faces of criminal enterprises.

Navigating the Hall of Mirrors

So, how do we protect ourselves in this hall of mirrors? As deepfakes become more realistic, the responsibility falls on several different groups: technology platforms, regulatory bodies, and, most importantly, the audience. We are entering an era where "seeing is believing" is no longer a safe mantra. Instead, we must adopt a mindset of healthy scepticism and digital literacy.

Technology platforms have a massive role to play. They need to invest more heavily in deepfake detection tools that can automatically flag synthetic content. There is also a push for "digital watermarking" or cryptographic signatures that can verify the origin of a video. If a video claims to be from a reputable news source but lacks the correct digital credentials, it should be treated with extreme caution. However, as detection technology improves, so do the methods used to bypass it, creating a constant arms race between scammers and security experts.
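
To make the idea of a cryptographic signature concrete, here is a minimal Python sketch. It is an illustration under simplifying assumptions, not how any particular platform or standard works: the key, the function names `sign_video` and `is_authentic`, and the sample bytes are all hypothetical. It also uses a shared secret (an HMAC) to stay within Python's standard library, whereas a real provenance scheme would more likely use public-key signatures so that anyone can verify a clip without being able to forge one.

```python
import hashlib
import hmac

# Hypothetical key, for illustration only. In a public-key scheme the newsroom
# would keep a private signing key and publish only the matching public key.
PUBLISHER_KEY = b"example-newsroom-key"


def sign_video(video_bytes: bytes, key: bytes = PUBLISHER_KEY) -> str:
    """Return a hex tag that ties the key holder to these exact bytes."""
    return hmac.new(key, video_bytes, hashlib.sha256).hexdigest()


def is_authentic(video_bytes: bytes, tag: str, key: bytes = PUBLISHER_KEY) -> bool:
    """Recompute the tag and compare it; any change to the bytes breaks the match."""
    expected = sign_video(video_bytes, key)
    return hmac.compare_digest(expected, tag)


# Stand-ins for real video files.
original = b"genuine broadcast footage"
tampered = b"genuine broadcast footage with swapped audio"

tag = sign_video(original)

print(is_authentic(original, tag))  # the untouched clip matches its tag
print(is_authentic(tampered, tag))  # the altered clip does not
```

The point of the sketch is that the check depends on the exact contents of the file. A clip with swapped audio or altered mouth movements no longer matches the tag issued for the original, however convincing it looks to a viewer.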

Legislation is another key piece of the puzzle. We need clear legal frameworks that treat deepfake impersonation with the seriousness it deserves. This isn't just a copyright issue; it’s a form of fraud and identity theft. Lawmakers need to ensure that there are real consequences for those who create and distribute malicious deepfakes, and that victims have a clear path to getting the content removed and seeking justice.

Finally, as consumers of news, we have to become more discerning. When you encounter a video that seems out of character for a journalist or makes claims that sound too good to be true, take a moment to verify it. Check the official social media channels of the journalist or their news organisation. Look for inconsistencies in the video: sometimes the lighting around the mouth looks slightly off, or the blinking patterns seem unnatural. Most importantly, look at the source. Is the video being shared by a verified account, or is it coming from a random page with zero followers?

The rise of deepfakes is a clear reminder of how quickly the digital world keeps changing. While we continue to value independent news in the UK and the untold stories that deserve proper attention, we also need to stay alert to the ways technology can be used to mislead. The battle for truth in the age of AI is only getting more complex, and it affects everyone who consumes news.

The challenge of deepfakes is serious, but it is not impossible to address. The focus now needs to stay on transparency, education and stronger tools for verifying the media people consume. Protecting the faces and voices of journalists is essential to maintaining trust in public information.

As technology continues to blur the line between the real and the synthetic, factual reporting remains the strongest defence. A more informed and careful public will be better placed to spot deception and protect the value of genuine journalism.