Generative AI has shifted from a niche creative hack to a default feature in 2026, with image, video, audio and design tools now bundled into the same everyday apps people use for work and social media.

The trend is being driven by three forces happening at once: better models that follow instructions more reliably, cheaper and more available compute, and a fast-growing expectation from clients that content can be produced and iterated in hours, not weeks.

In practice, “AI art” in 2026 is less about a single viral aesthetic and more about a production system that sits behind everything from album covers and ad storyboards to game assets and explainer videos.

That scale is forcing old questions to become operational ones: who owns the output, who consented to the inputs, and how audiences can trust what they are seeing.

How AI tools are changing day-to-day creative work

Creative work is being reorganised around speed, versioning and cross-format output.

A common workflow now starts with a rough concept generated in minutes, then moves through rapid variation: different compositions, lighting, locations, demographics, wardrobe and brand colours, all produced as sets rather than single “finals”.

That shift is especially visible in commercial design.

Agencies increasingly deliver “option libraries” to clients, not one hero concept, because A/B testing and multi-platform publishing reward quantity and tight iteration loops.

Localisation has become a baseline expectation.

Brands want the same creative idea rendered for different regions, languages and cultural cues, and AI-assisted pipelines can generate those variants quickly, then hand off to human designers for final approvals and compliance checks.

Video has followed the same direction, but with higher stakes.

Pre-production work that used to take days—storyboards, animatics, mood reels, and rough scene blocking—is now routinely produced in-house by small teams, letting directors and producers test pacing and tone before money is spent on shoots.

In music and audio, AI tools are increasingly used for drafts and structure.

Creators use them to sketch hooks, harmonies or sound palettes, then record final vocals and instruments themselves or with session musicians, keeping control over identity while cutting early-stage friction.

The other major change is organisational.

More of the workflow is cloud-based, which lowers the barrier for freelancers but also makes teams dependent on platform subscriptions, usage caps and shifting terms of service.

A single model update can change a “house style” overnight, which has led some studios to lock down model versions for ongoing campaigns to avoid inconsistent output.
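
As a rough illustration of what locking a model version can look like in a pipeline, the sketch below refuses a render job whose model differs from the one pinned for a campaign. The names used here (`CampaignPin`, `check_pin`, the campaign and model labels) are hypothetical and do not come from any particular platform; real tools expose version pinning in their own ways, if at all.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CampaignPin:
    """Hypothetical record tying one campaign to one model version."""
    campaign: str
    model_name: str
    model_version: str


def check_pin(pin: CampaignPin, model_name: str, model_version: str) -> None:
    """Raise ValueError if a job would use a model other than the pinned one."""
    if (model_name, model_version) != (pin.model_name, pin.model_version):
        raise ValueError(
            f"{pin.campaign}: expected {pin.model_name}@{pin.model_version}, "
            f"got {model_name}@{model_version}"
        )


# Illustrative values only.
pin = CampaignPin("spring-launch", "image-model", "2.3.1")
check_pin(pin, "image-model", "2.3.1")  # matches the pin, so nothing is raised
```

A check like this only helps if the platform lets a team request a specific version in the first place, which is itself subject to the shifting terms of service mentioned above.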

A quieter cultural shift is happening around skill.

Basic competence now includes prompt craft, reference gathering, and knowing how to steer outputs with constraints—much like knowing Photoshop layers became a standard expectation years ago.

At the same time, traditional skills have not disappeared; they have moved up the chain, with human attention concentrated on concept, editing, taste and approvals.

Authenticity, provenance and the push for disclosure

The biggest tension in 2026 is not whether AI can make convincing images.

It is whether anyone can prove what an image is, where it came from, and what was changed along the way.

That matters in commercial work, where clients want to understand licensing and risk, and it matters in public life, where synthetic or heavily edited visuals can move faster than corrections.

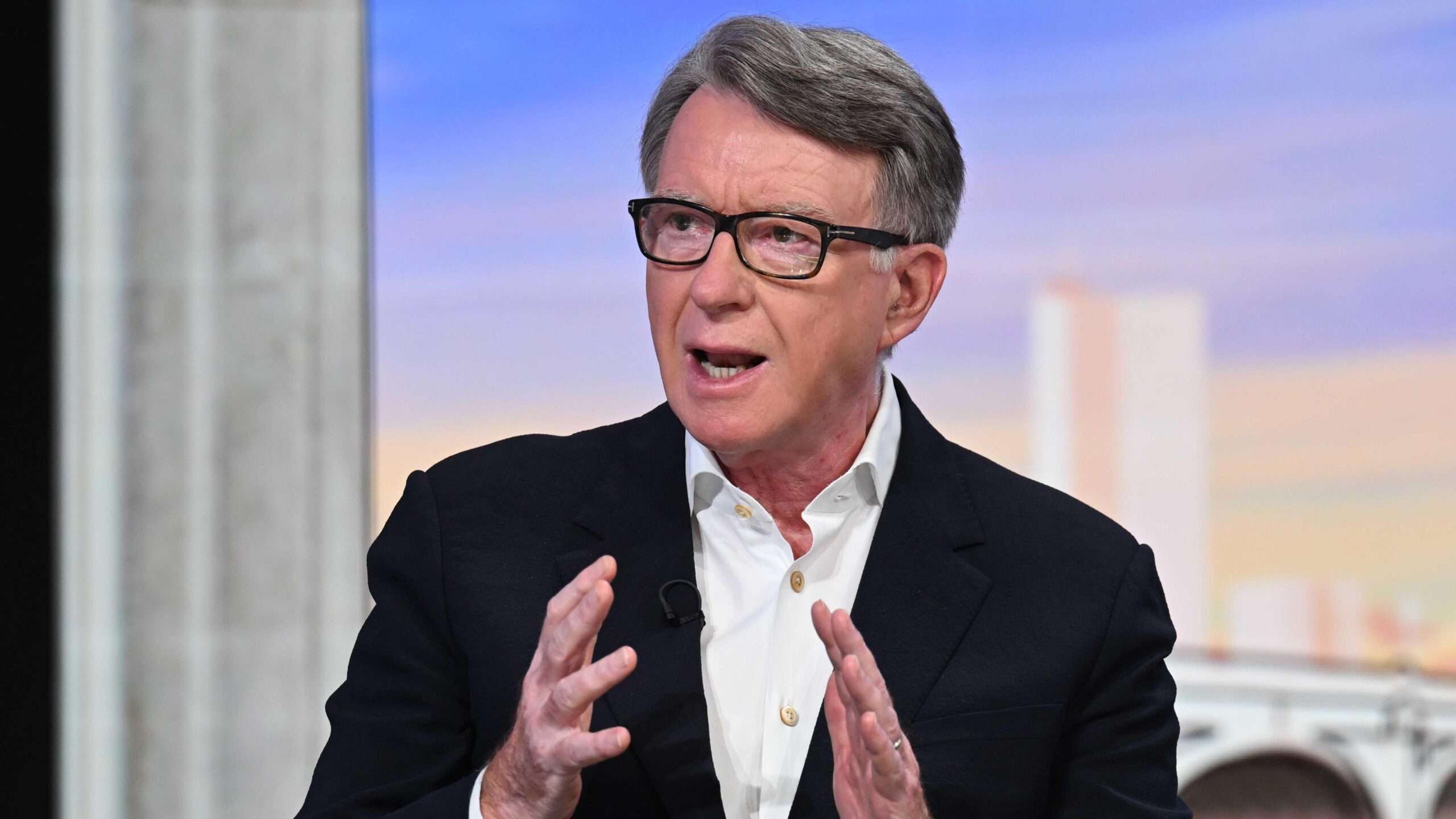

As a result, disclosure has become a practical workflow step, not a moral debate.

Some platforms and marketplaces now expect creators to declare whether AI was used, and some clients write that requirement into contracts, particularly in advertising, political communications and editorial illustration.

Provenance tools are expanding, but they remain uneven.

Metadata and watermarking systems can be stripped, screenshots remove context, and social platforms often compress files in ways that break traceability.

Even when labels exist, they can be misunderstood by audiences, who may read “AI-assisted” as either harmless editing or total fabrication.

Creators are adapting in a few distinct ways.

One approach is documentation: keeping prompt logs, source references, and version histories, then delivering them as part of the project handover, similar to how photographers deliver RAW files or how video editors deliver project files.
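
A minimal sketch of such a handover log, assuming a team simply wants one record per version with the prompt, the model, the source references and a hash of the output file. The field names and the helper functions `record_version` and `export_log` are illustrative assumptions, not an industry standard or any tool's actual format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ProvenanceEntry:
    """One hypothetical log entry per generated or edited version."""
    version: int
    prompt: str
    model: str
    source_refs: list[str] = field(default_factory=list)
    output_sha256: str = ""


def record_version(log, prompt, model, source_refs, output_bytes):
    """Append an entry whose hash ties it to the exact output file."""
    entry = ProvenanceEntry(
        version=len(log) + 1,
        prompt=prompt,
        model=model,
        source_refs=list(source_refs),
        output_sha256=hashlib.sha256(output_bytes).hexdigest(),
    )
    log.append(entry)
    return entry


def export_log(log):
    """Serialise the whole history as JSON for the project handover."""
    return json.dumps([asdict(entry) for entry in log], indent=2)


# Illustrative usage with placeholder data.
log = []
record_version(log, "poster concept, warm light", "image-model@2.3.1",
               ["ref/studio-shot-01.jpg"], b"placeholder image bytes")
print(export_log(log))
```

The hash lets a client confirm that a delivered file matches a logged version, but it says nothing once the file is re-encoded or screenshotted, which is the same weakness that affects metadata and watermarking.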

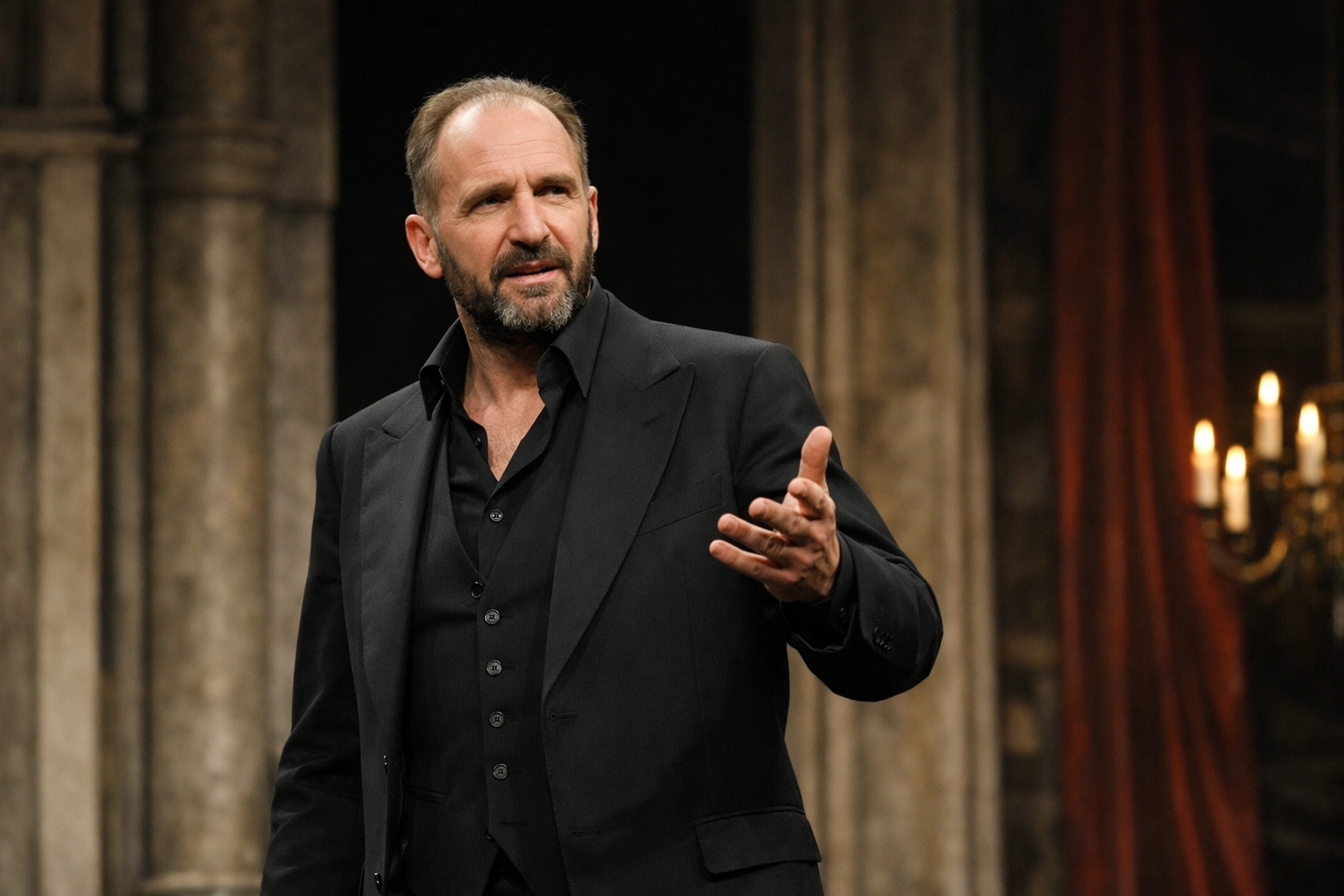

Another is a deliberate “human signal”.

Some artists choose rougher textures, visible brushwork, or collage-like edits to show active authorship, while others publish process clips to demonstrate that the work was directed rather than simply generated.

A third is the move towards consent-led sourcing.

More creators now insist on using licensed datasets, commissioned photography, or self-shot reference material to reduce legal exposure and ethical uncertainty, especially when the final work resembles a recognisable artist, performer or public figure.

Culturally, there is also a taste split.

Some audiences have become numb to “perfect” visuals and are gravitating back to messy, specific and local work, such as zines, analogue photography, regional design cues and community-led art scenes, because such work feels harder to fake and easier to trust.

What wider adoption means for jobs, law and culture

The employment impact in 2026 is most visible in routine creative tasks.

Entry-level production work, such as basic retouching, background generation, simple logo explorations and first-draft layouts, can now be done faster by smaller teams, which changes the route by which people have traditionally got their start.

That does not remove the need for human creatives, but it compresses the ladder.

More value is shifting to roles that can own outcomes end-to-end: creative direction, brand guardianship, narrative development, and the ability to edit, curate and sign off under pressure.

New job categories are also forming.

Studios increasingly hire people for model steering, dataset curation, prompt-to-production workflows, and compliance review, especially in sectors where mistakes are expensive, such as regulated advertising, healthcare communications and politics.

Law is still struggling to match the pace of adoption.

Copyright and training-data disputes remain active across jurisdictions, and the practical question for businesses is often not “is this art?” but “is this safe to publish?”

Teams are paying closer attention to likeness, voice and style imitation risks, particularly when a generated output resembles a known creator or when prompts reference living artists.

The cultural argument has widened beyond artists’ rights.

In 2026, AI art sits inside broader concerns about authenticity, labour, and power: who benefits when production is automated, who bears the risk when something goes wrong, and who gets to decide what counts as original.

There is also a geopolitical angle.

Model development is concentrated in a small number of countries and companies, while the outputs circulate globally, raising questions about whose cultural defaults are being encoded into tools that shape visual norms worldwide.

The net effect is that “AI art” is no longer a single trend to love or hate.

It is an infrastructure layer for modern media, and the next phase will likely be defined less by novelty and more by governance: clearer licensing, stronger provenance, and industry norms that explain, in plain terms, when a human made something, when a model helped, and why that distinction matters.