The digital landscape in 2026 feels a bit like a hall of mirrors. We have reached a point where seeing is no longer necessarily believing, and that is a problem for anyone who spends time scrolling through social media or catching up on the news. While we have spent years worrying about fake news and doctored photos, the technology has taken a massive leap forward. We are now facing the era of the high-definition, AI-generated deepfake. This isn't just about fun face-swaps or making a celebrity say something silly; it has become the sharp end of a very dangerous spear in the world of digital fraud.

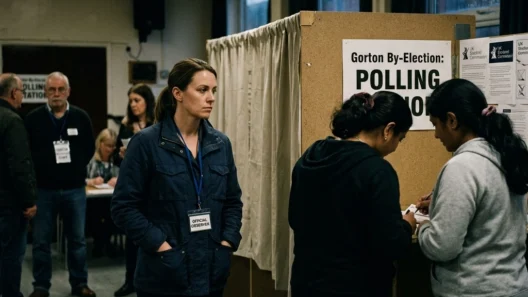

Lately, there has been a particularly nasty trend emerging across the UK. Scammers are now using sophisticated AI to clone the voices and likenesses of trusted media personalities to peddle illegal gambling schemes. It is a cynical exploitation of trust that targets people looking for legitimate financial advice or those who follow independent UK news outlets. By hijacking the faces of people we usually rely on for the truth, these fraudsters are creating a crisis of confidence that goes far beyond a simple lost bet.

The rise of these scams highlights a series of untold stories about how easily our digital identities can be weaponised. When a journalist’s face appears on your feed, explaining a "guaranteed" way to beat a casino algorithm or an "exclusive" new betting app that the government doesn't want you to know about, it carries a weight of authority. It feels like an investigation or a breakthrough, rather than a trap. But behind the realistic blinking eyes and the perfect British accent lies a complex network of AI tools designed to separate people from their hard-earned money.

When Reality Becomes Optional

The jump in quality we have seen in deepfake technology over the last twelve months is nothing short of staggering. In the early days, you could usually spot a fake by looking at the mouth movements or noticing a strange flicker around the eyes. Those days are largely behind us. Today’s AI models can recreate a person’s unique mannerisms, their speech patterns, and even the subtle way they tilt their head when they are making a point. When this tech is applied to a well-known journalist from a respected independent UK news platform, the results are terrifyingly convincing.

The process usually starts with "scraping." Scammers use bots to collect hundreds of hours of video footage of their target. They look for journalists who have a high level of public trust: people known for uncovering the truth or sharing untold stories. Once they have enough data, they feed it into a generative adversarial network (GAN). This is where two AIs work against each other: one creates the fake video, and the other tries to spot the flaws. They do this millions of times until the "fake" is indistinguishable from the "real" to the human eye.
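To make that tug-of-war concrete, here is a deliberately tiny sketch of the same idea in plain Python. It is not how a real deepfake system is built: those train large neural networks on images and audio, while this toy works with single numbers. The function name `train_toy_gan`, the parameters `b`, `w` and `c`, and the choice of "real" data (numbers clustered around 4) are all assumptions made up for illustration.

```python
import math
import random


def sigmoid(t):
    # Numerically stable logistic function, output between 0 and 1.
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)


def train_toy_gan(steps=2000, lr=0.02, real_mean=4.0, seed=0):
    """Toy 1-D GAN: the 'forger' learns an offset b, the 'detective'
    learns a score D(x) = sigmoid(w * x + c) for 'this looks real'."""
    rng = random.Random(seed)
    b = 0.0            # generator parameter: fake sample = b + noise
    w, c = 0.0, 0.0    # discriminator parameters
    for _ in range(steps):
        real = rng.gauss(real_mean, 1.0)
        fake = b + rng.gauss(0.0, 1.0)

        # Detective's turn: push D(real) up and D(fake) down.
        d_real = sigmoid(w * real + c)
        d_fake = sigmoid(w * fake + c)
        w += lr * ((1.0 - d_real) * real - d_fake * fake)
        c += lr * ((1.0 - d_real) - d_fake)

        # Forger's turn: nudge b so the detective scores the fake higher.
        d_fake = sigmoid(w * fake + c)
        b += lr * (1.0 - d_fake) * w
    return b, w, c


b, w, c = train_toy_gan()
print(f"forger offset b = {b:.2f} (real data is centred on 4.0)")
```

Each pass through the loop is one round of the contest: the detective updates first, then the forger adjusts to fool the updated detective. Toy setups like this can wobble around the target rather than settle on it, which is one reason real systems need far more careful training.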

The goal isn't just to make a video; it's to create a narrative. These scams often mimic the visual style of a breaking news report. They use the same fonts, the same lower-third banners, and the same studio lighting that you would see on a legitimate broadcast. By the time the viewer realises they are watching a deepfake, they have often already clicked the link in the description. The casual, friendly tone used by the AI makes the viewer feel like they are being let in on a secret, creating a false sense of intimacy and security.

The Mechanics of the Gambling Trap

So, why gambling? The reason is simple: it is a high-emotion, high-reward industry that already operates in a grey area of the internet. By using an AI deepfake of a journalist, scammers can claim they have discovered an "untold story" about a glitch in a betting site's software or a secret "sure-fire" strategy developed by mathematicians. Because the face telling the story is one the viewer recognises from legitimate independent UK news coverage, the natural scepticism we usually have for "get rich quick" schemes is lowered.

These illegal gambling scams are particularly insidious because they often lead victims to "cloned" websites. Once you click that link, you aren't just giving away your money to a bad bet; you are often handing over your full credit card details and personal identity information to a criminal syndicate. The AI video is just the hook. The real damage happens in the backend, where sophisticated phishing systems harvest data at an industrial scale. We have seen a massive surge in this type of fraud, with some reports suggesting that AI-enabled scams have increased by over 1,000% in the last year alone.

The economics of this are also shifting. It used to take a team of skilled editors and a lot of time to create a convincing fake. Now, "Deepfake-as-a-Service" kits are available on the dark web for as little as a few hundred pounds. A scammer doesn't need to be a tech genius; they just need one of these kits, a "dark" LLM (large language model) to write the script, and a target. They can generate dozens of different versions of the same scam, testing which journalist's face gets the most clicks and which gambling "hook" converts the best. It is a data-driven approach to crime that moves faster than traditional law enforcement can respond.

Protecting the Truth in a Digital Age

As these scams become more prevalent, the responsibility for staying safe is increasingly falling on the individual. However, it shouldn't just be about being "careful." We need to understand the tells of this new generation of fraud. Even the best AI occasionally struggles with "temporal consistency": this is a fancy way of saying that sometimes the person's glasses might flicker for a single frame, or their earring might momentarily merge into their neck. If a video feels slightly "off," it probably is.
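For the curious, the idea behind a temporal consistency check can be sketched in a few lines of Python. This is a simplified illustration, not a working deepfake detector: it treats each frame as a short list of grayscale pixel values and flags any frame that changes far more than is typical for the clip. The function names `frame_diffs` and `flag_flicker`, and the `ratio` and `floor` thresholds, are assumptions chosen for the example.

```python
from statistics import median


def frame_diffs(frames):
    """Mean absolute pixel difference between consecutive frames."""
    diffs = []
    for prev, curr in zip(frames, frames[1:]):
        total = sum(abs(a - b) for a, b in zip(prev, curr))
        diffs.append(total / len(curr))
    return diffs


def flag_flicker(frames, ratio=5.0, floor=1.0):
    """Return indices of frames whose change from the previous frame is
    much larger than the clip's typical frame-to-frame change."""
    diffs = frame_diffs(frames)
    if not diffs:
        return []
    typical = max(median(diffs), floor)
    # diffs[i] compares frame i with frame i + 1, so report frame i + 1.
    return [i + 1 for i, d in enumerate(diffs) if d > ratio * typical]


# Toy clip: six 4-pixel frames that drift slowly,
# with a one-frame glitch at index 3.
clip = [
    [10, 10, 10, 10],
    [11, 10, 11, 10],
    [11, 11, 11, 11],
    [11, 90, 11, 90],  # glitch
    [12, 11, 12, 11],
    [12, 12, 12, 12],
]
suspect = flag_flicker(clip)
print(suspect)  # [3, 4]: the jump into the glitch and the jump back out
```

A genuine scene cut would trip the same alarm, so a check like this can only point a reviewer at frames worth a closer look.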

Another key is to look at the source. Legitimate independent UK news organisations will never use their journalists to promote gambling apps or secret betting loopholes. If a famous face is telling you to put money into a specific platform, it is a massive red flag. The "untold stories" worth listening to are the ones that come through verified channels, not sponsored ads that appear out of nowhere on your timeline. It is always worth taking thirty seconds to go to the official website of the news outlet to see if the story actually exists there.

Beyond individual vigilance, there is a growing conversation about the role of the platforms themselves. Social media companies have tools that can detect some AI-generated content, but the sheer volume of uploads makes it a constant game of cat and mouse. There is a pressing need for better digital watermarking and "provenance" standards: systems that can prove a video was actually recorded on a specific camera and has not been altered since. Until those systems are universal, we have to treat every "too good to be true" video with a healthy dose of suspicion.
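The core idea behind provenance is simpler than it sounds, and a rough Python sketch shows it. The camera stamps the footage with a signature at the moment of recording, and anyone checking the video later can tell whether a single byte has changed. This sketch takes a shortcut, assuming one shared secret key and Python's built-in `hmac` module; real provenance schemes rely on public-key signatures and certificates instead. The names `sign_video` and `verify_video` and the key value are hypothetical.

```python
import hashlib
import hmac


def sign_video(video_bytes: bytes, device_key: bytes) -> str:
    """Fingerprint the footage, then sign the fingerprint with the key."""
    digest = hashlib.sha256(video_bytes).digest()
    return hmac.new(device_key, digest, hashlib.sha256).hexdigest()


def verify_video(video_bytes: bytes, device_key: bytes, signature: str) -> bool:
    """True only if the footage still matches the signature it shipped with."""
    expected = sign_video(video_bytes, device_key)
    return hmac.compare_digest(expected, signature)


key = b"hypothetical-camera-key"       # stand-in for a key held by the camera
original = b"raw footage bytes"        # stand-in for a real video file
tag = sign_video(original, key)

ok_original = verify_video(original, key, tag)
ok_tampered = verify_video(original + b"!", key, tag)
print(ok_original, ok_tampered)  # True False
```

A signature like this shows that a file has not been altered since it was signed. It cannot show that what the camera recorded was true in the first place.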

The era of the deepfake is a challenge to our shared reality. When the voices we trust are used to lie to us, it erodes the very foundation of how we consume information. By staying informed and understanding how these tools are being used, we can protect ourselves from the financial and emotional toll of these scams. Digital fraud is moving into a new dimension, and it is up to all of us to ensure that the truth remains more powerful than the code.

The reality of digital fraud in 2026 is that it is no longer about simple deception; it is about the wholesale fabrication of trust. By being aware of these tactics, we can keep the focus on real stories and genuine news.